I am a third-year PhD candidate in the Department of Electronic and Computer Engineering at The Hong Kong University of Science and Technology, affiliated with the AI Chip Center for Emerging Smart Systems and the Vision and System Design Lab (VSDL), under the supervision of Prof. Kwang-Ting CHENG. A concise version of my experience is available in my CV.

Before joining HKUST, I received my B.E. in Microelectronics from the School of Microelectronics, Southern University of Science and Technology, where I worked with Prof. Fengwei An.

My research interests include software-hardware co-design, AI accelerators, LLM/VLM systems, and 3D processing.

News

- 2026.01: One paper was accepted by CICC 2026.

- 2025.10: One paper was accepted by ISSCC 2026.

- 2025.02: One paper was accepted by DAC 2025.

- 2024.10: One paper was accepted by ISSCC 2025.

- 2024.02: One paper and one poster were accepted by DAC 2024.

- 2022.08: The second paper from our SLAM accelerator project was accepted by Sensors.

- 2022.08: The first paper from our SLAM accelerator project, which is also my first paper, was accepted by TCAS-II.

- 2021.12: Our team won first prize in the 2021 National College Students FPGA Innovation Design Competition.

- 2021.10: Our team won first prize in the 2021 International Competition of Autonomous Running Robots.

Research Projects

- 5nm UCIe-Enabled Multi-Chiplet Generalizable Rendering Processor (Mar. 2024 - Sept. 2025)

  - Designed the architecture and execution scheduler for a 5nm four-chiplet GeNeRF processor, and built an early-stage simulation framework to evaluate buffer management, cross-die source-view caching, dense/sparse D2D transfer, multi-level sparsity control, and hybrid GeNeRF-SR dataflow.

  - Developed algorithm-hardware co-design simulation models for projection-area-driven source-view placement, dynamic patch grouping, dense/sparse D2D modes, source-view pruning, tile-level fine-stage sharing, and patch-grained SR routing; used them to validate accuracy-sensitive sparsity and SR decisions and to guide hardware-aware end-to-end quantization for silicon deployment.

  - Contributed to the implementation of the inter-chiplet transfer, chip-level control, and nonlinear computation modules; participated in chip testing and system validation of the fabricated 5nm MCM processor, which integrates the four-chiplet GeNeRF engine in a 45 mm × 45 mm package and achieves an energy efficiency of 91.43 TOPS/W and a throughput of 55.43 FPS.

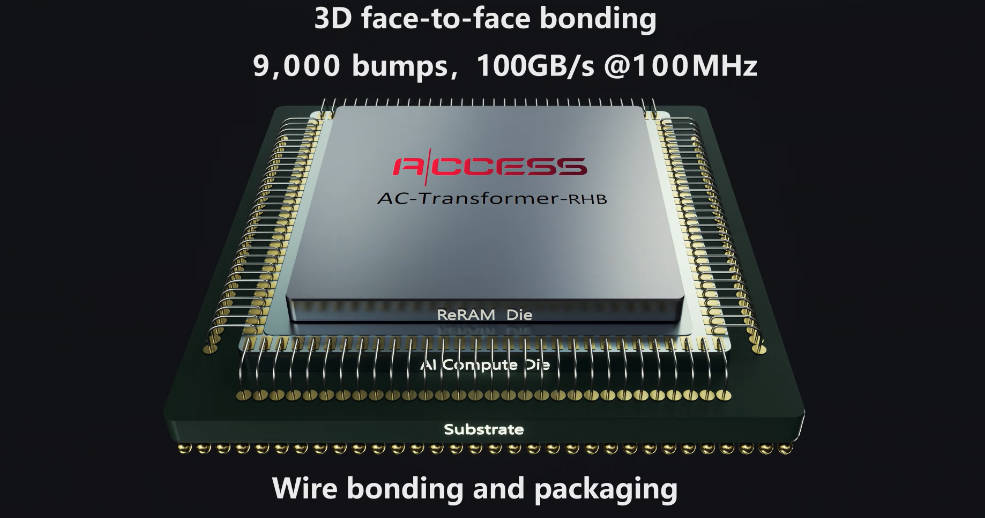

- 55nm ReRAM-on-Logic Stacked LLM Accelerator for Speculative Decoding (Apr. 2024 - Aug. 2025)

  - Contributed to algorithm-hardware co-design optimizations for a 55nm ReRAM-on-logic stacked edge LLM accelerator, targeting decoding-stage memory and scheduling bottlenecks with local-rotation-based W4A8 quantization, ReRAM-resident block-clustered codebook reconstruction, and adaptive speculative decoding.

  - Implemented and validated the end-to-end algorithm flow, including layer-wise quantized LLM evaluation, codebook-based draft-model weight reconstruction, acceptance/rejection-aware speculative decoding analysis, and hardware-mapping studies that balance the target model's external memory access (EMA) reduction against rejected-draft overhead.

  - Designed RTL for nonlinear computation modules and the related control/datapath logic; assisted with chip testing and validation of the 55nm logic die stacked with four ReRAM dies via face-to-face bumps, achieving 14.08 to 135.69 tokens/s on a 55.98 mm² logic die.

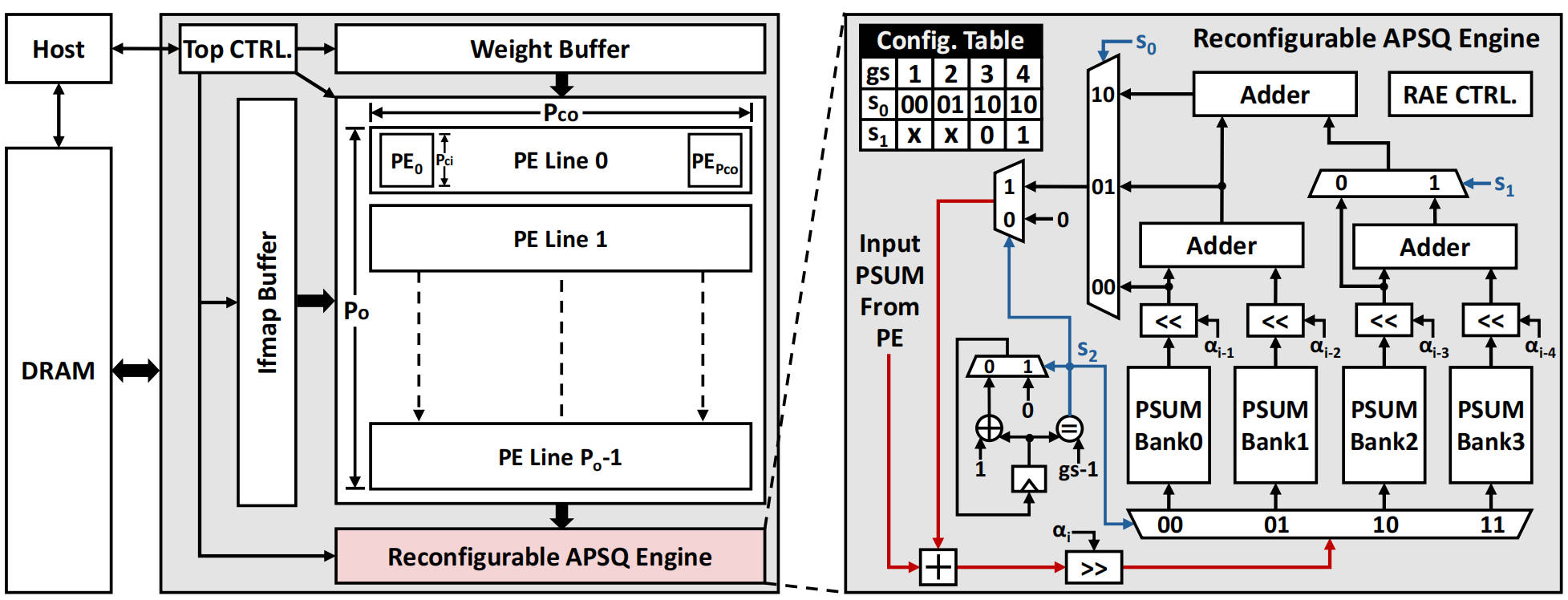

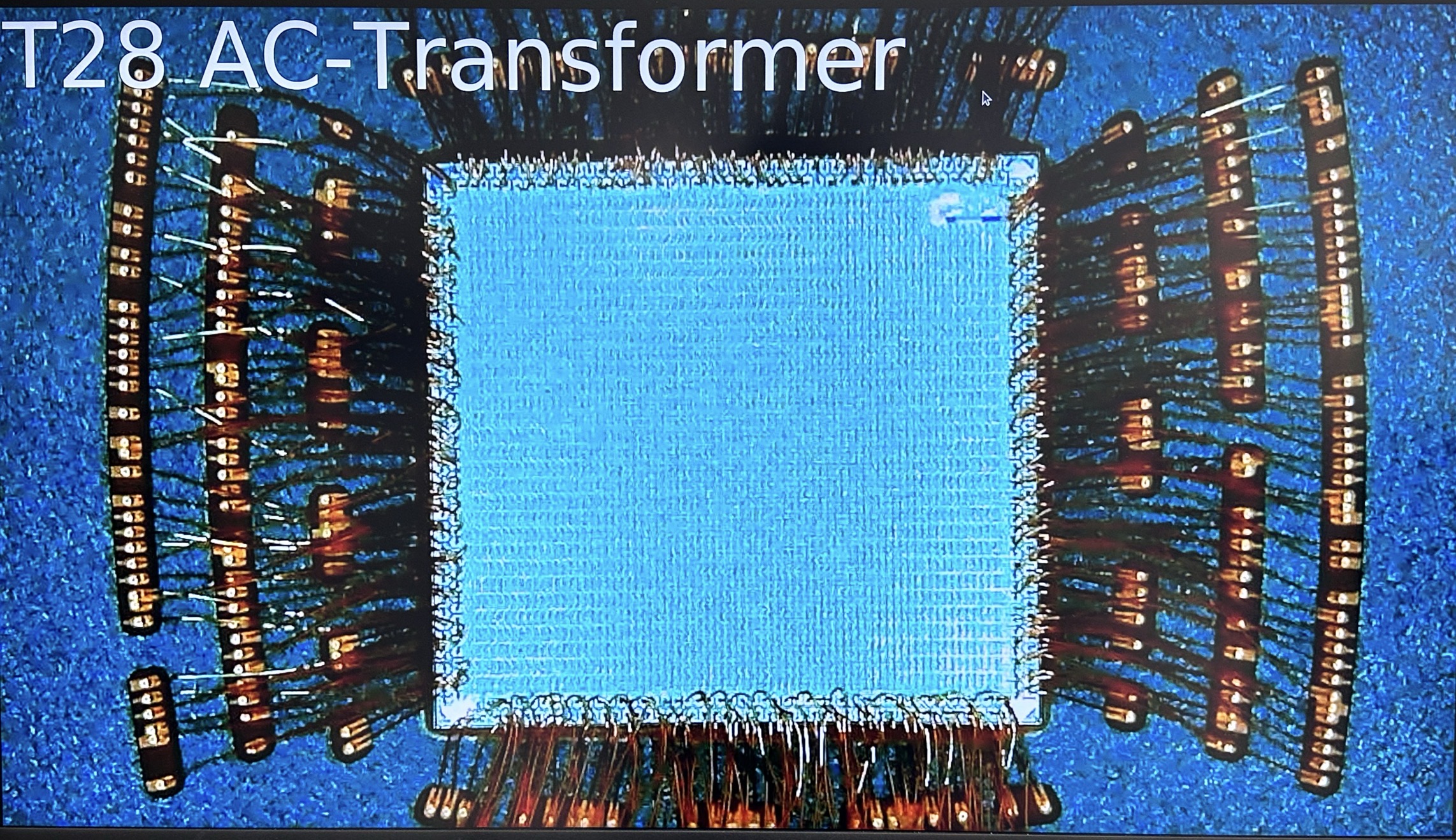

- 28nm CNN-Transformer Accelerator for Semantic Segmentation (Nov. 2021 - Sept. 2024)

  - Contributed to algorithm-hardware co-design optimizations for a 28nm CNN-Transformer semantic-segmentation accelerator, including hybrid attention processing, data-reuse-oriented layer fusion, and cascaded feature-map pruning for high-resolution ConvFormer workloads.

  - Built a hardware energy simulation framework to quantify the impact of each optimization and guide architecture and tape-out decisions; implemented the algorithm validation flow for VA/LA hybrid attention, KV/weight reuse scheduling, non-overlap layer fusion, and mask-based cascaded pruning.

  - Designed RTL for attention/layer-fusion control and pruning-related datapath logic; assisted with silicon testing and validation of the 13.93 mm² 28nm chip, achieving 0.22 µJ/token and a peak efficiency of up to 52.90 TOPS/W.

Publications

A 5nm 91.43 TOPS/W 4-Chiplet Generalizable-Rendering-Processor with UCIe-Enabled Cross-Die-Cache and Balance-Aware Progressive Multi-Level Sparsity

Yonghao Tan*, Songchen Ma*, Pingcheng Dong, Peng Luo, Zhiyuan Lei, Wencai Lu, Guangxi Ying, Man-To Yung, Haibo Zhao, Lan Liu, Yuzhong Jiao, Xuejiao Liu, Yu Liu, Li Li, Luhong Liang, Mao Liu, Kwang-Ting Cheng

\* Equal contribution.

A 14.08-to-135.69 Token/s ReRAM-on-Logic Stacked Outlier-Free Large-Language-Model Accelerator with Block-Clustered Weight-Compression and Adaptive Parallel-Speculative-Decoding

Pingcheng Dong, Yonghao Tan, Xuejiao Liu, Peng Luo, Yu Liu, Di Pang, Songchen Ma, Xijie Huang, Shih-Yang Liu, Dong Zhang, Luhong Liang, Chi-Ying Tsui, Fengbin Tu, Liang Zhao, Kwang-Ting Cheng

APSQ: Additive Partial Sum Quantization with Algorithm-Hardware Co-Design

Yonghao Tan*, Pingcheng Dong*, Yongkun Wu, Yu Liu, Xuejiao Liu, Peng Luo, Shih-Yang Liu, Xijie Huang, Dong Zhang, Luhong Liang, Kwang-Ting Cheng

\* Equal contribution.

Pingcheng Dong*, Yonghao Tan*, Xuejiao Liu, Peng Luo, Yu Liu, Luhong Liang, Yitong Zhou, Di Pang, Manto Yung, Dong Zhang, Xijie Huang, Shih-Yang Liu, Yongkun Wu, Fengshi Tian, Chi-Ying Tsui, Fengbin Tu, Kwang-Ting Cheng

\* Equal contribution.

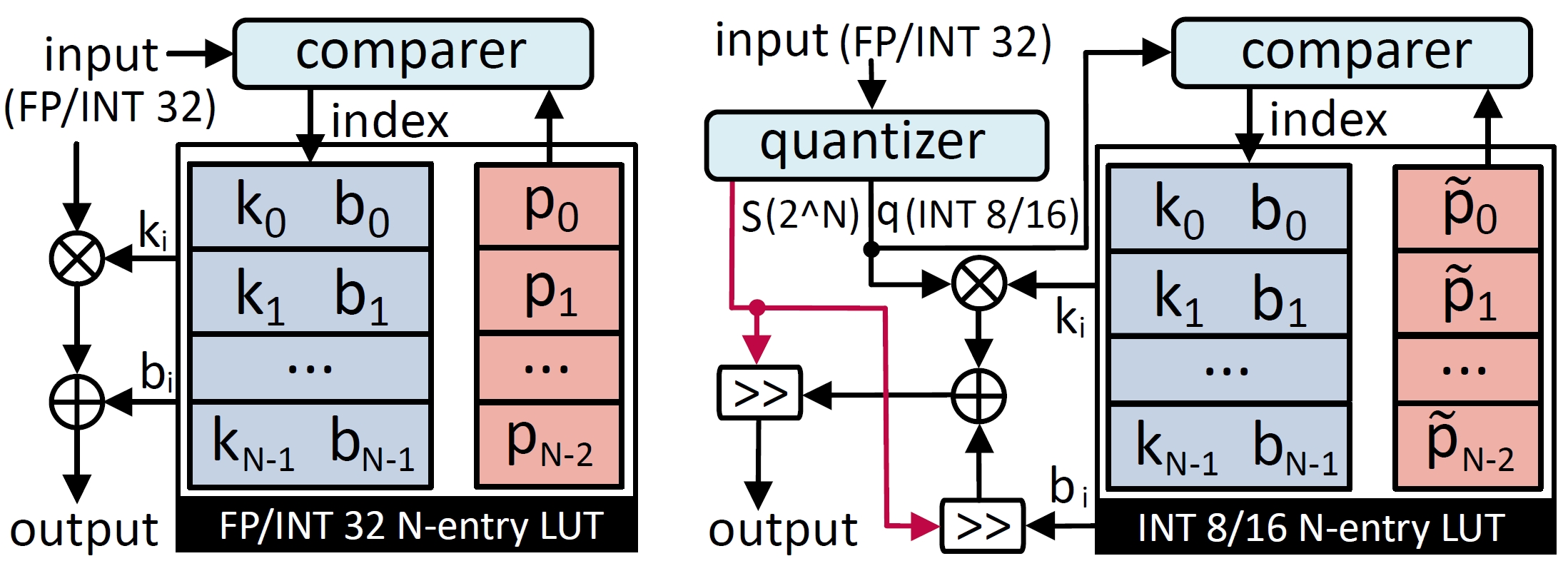

Genetic Quantization-Aware Approximation for Non-Linear Operations in Transformers

Pingcheng Dong*, Yonghao Tan*, Dong Zhang, Tianwei Ni, Xuejiao Liu, Yu Liu, Peng Luo, Luhong Liang, Shih-Yang Liu, Xijie Huang, Huaiyu Zhu, Yun Pan, Fengwei An, Kwang-Ting Cheng

\* Equal contribution.

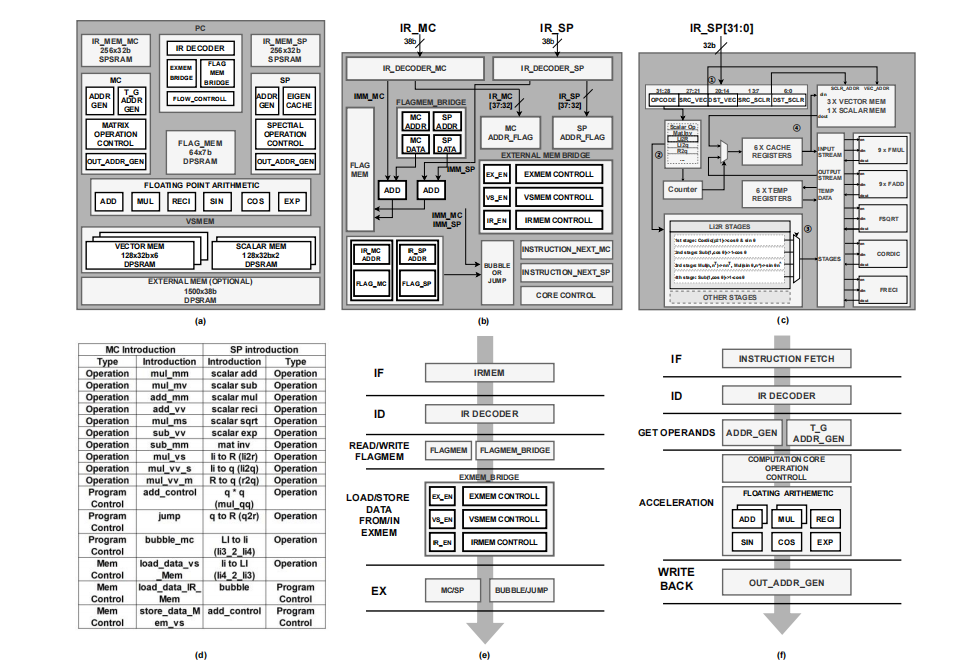

A Reconfigurable Coprocessor for Simultaneous Localization and Mapping Algorithms in FPGA

Yonghao Tan*, Huanshihong Deng*, Mengying Sun, Minghao Zhou, Yifei Chen, Lei Chen, Chao Wang, Fengwei An

\* Equal contribution.

Honors and Awards

- 2025.08 Best Teaching Assistant Award, Department of Electronic and Computer Engineering, HKUST

- 2023.05 Outstanding Graduate (School Level), SUSTech

- 2022.09 First-Class Outstanding Students Scholarship with the highest score

- 2022.04 Undergraduate Innovation and Entrepreneurship Training Program

- 2021.12 Shenzhen Longsys Electronics Company Award (Top 2% in the School of Microelectronics)

- 2021.12 First Prize, 2021 National College Students FPGA Innovation Design Competition (Top 22 out of 1,341 teams)

- 2021.10 First Prize, 2021 International Competition of Autonomous Running Robots (1st place out of 34 finalist teams)

Education

- 2023.09 - present, Doctor of Philosophy, Electronic and Computer Engineering, The Hong Kong University of Science and Technology, Hong Kong SAR, China.

- 2019.09 - 2023.06, Bachelor of Engineering, Microelectronics, Experimental Class, School of Microelectronics, Southern University of Science and Technology, Shenzhen, China. GPA: 3.77.

- 2016.09 - 2019.06, Shimen Middle School, Foshan, China.

Teaching Assistant

- ELEC2350: Introduction to Computer Organization and Design (2025 Fall)

- ELEC3400: Introduction to Integrated Circuits and Systems (2024 Spring)

- ELEC6910H: Advanced AI Chip and System (2024 Fall)